The Thinking Human vs. The Thinking Machine

We speak of thinking machines as though thinking were a technical capacity: a matter of processing speed, memory storage, and algorithmic precision. We compare outputs, measure efficiency, and marvel at computational scale. The question often asked is whether machines can think like humans. But perhaps the more urgent question in the age of machines is this: What kind of thinking matters in governance and human society, and can it be replicated?

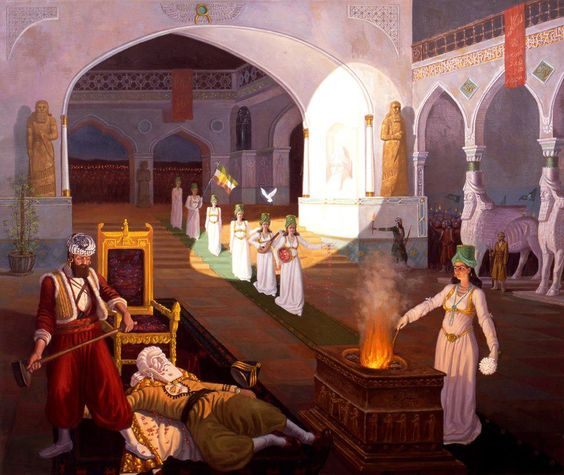

The distinction is not trivial. In public life, thinking is not merely calculation. It is judgment under uncertainty, in war and in peace alike. Thinking is the ability to hold competing values in tension until a decision is reached. More importantly, it is the willingness to accept responsibility for decisions that carry moral weight, a weight that extends beyond any algorithmic calculation of what counts as right or wrong.

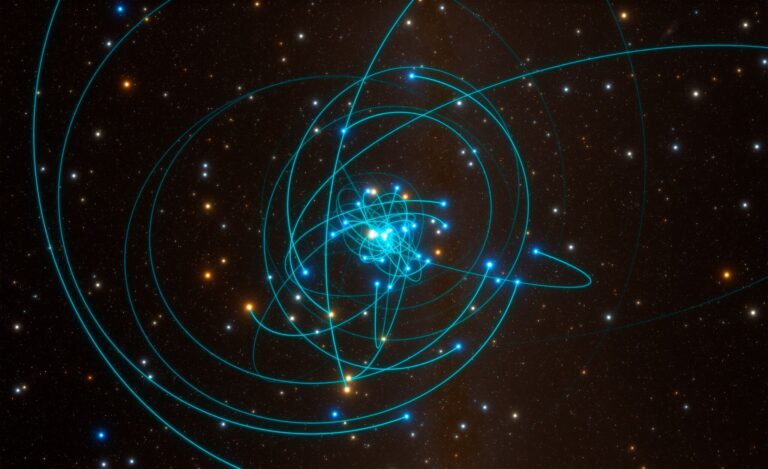

There is no question that machines process information faster than humans, detecting patterns across enormous datasets and optimizing outcomes within defined parameters. In many domains, they outperform humans decisively. They do not tire; they do not eat or sleep. When a decision must be made, they do not hesitate, and they do not suffer from cognitive bias in the ways we do. This is what is often held up as efficient and effective governance in the age of Artificial Intelligence (AI), a defining technology of what is often called the Fourth Industrial Revolution.

In political systems, governance does not operate solely within predefined parameters based on fixed mathematical models. Political life is saturated with ambiguity, and history shows that political decisions are rarely reducible to optimization problems, because each problem takes its shape from the particular political system in which it arises. Decisions involve trade-offs between competing goods, incomplete information, and consequences that cannot always be modeled in advance. The dividing line is this: a predictive system can estimate risk, but it cannot bear responsibility for the outcome. In some cases, machines not only cannot bear responsibility; they also cannot explain how they reached a given decision, a phenomenon known as “the Black Box Problem.”

This distinction becomes more consequential as artificial intelligence systems increasingly guide public decisions, from resource allocation to security assessments to policy analysis. The appeal is understandable. Machine systems promise clarity in environments marked by complexity. They offer probabilities when humans struggle with doubt. They provide outputs that appear neutral, objective, and data-driven. Yet neutrality is often an illusion of framing. Every system reflects the assumptions embedded in its design. Every optimization depends on a chosen objective function. The machine calculates efficiently, but only within the boundaries we define.

Human thinking, by contrast, includes the capacity to question the boundary itself. One of the most underappreciated dimensions of human judgment is imagination. Humans can redefine the problem, step outside a given framework, and ask whether the objective is legitimate in the first place. We can decide that what is efficient is not necessarily what is just and ethical. This is why human societies must evolve by restructuring existing systems around institutional imagination: the capacity of governance systems to anticipate long-term implications and to evaluate new tools ethically. Imagination within institutions can revisit the very constraints within which mathematical calculation operates. A system built purely on predictive logic, by contrast, may become highly optimized for yesterday’s patterns, but it cannot independently envision a different horizon. None of this is an argument against machine intelligence; it is a recognition of the difference between human thinking and machine thinking. The danger is not that machines will outthink us; rather, the danger is that we may begin to mistake calculation for judgment.

In my study of machines and AI systems, I have repeatedly observed that uncertainty in human life makes prediction seductive, because prediction offers a sense of control in a world of chaos and ambiguity. In governance, especially under pressure, the temptation to rely on probabilistic systems is strong. When machines reduce complex issues to the press of a button on a dashboard, ambiguity is transformed into metrics, producing outputs that appear decisive.

The main argument, then, is that machine prediction is not responsibility, nor can it be treated as a substitute for human judgment. When a human decision-maker errs, accountability can be traced to the person in command, who must answer for the consequences, as enshrined in International Humanitarian Law (IHL). In machine-mediated environments, responsibility becomes diffuse, leaving many questions unanswered: Was the outcome a result of flawed data? A biased model? An incorrect parameter setting? Or human reliance on the system itself?

The diffusion of responsibility is not merely technical. It is ethical. Governance depends on clear lines of accountability. If decision-making increasingly relies on systems that no single actor fully controls, the moral hierarchy of responsibility becomes blurred, and over the long term it invites a contest over whose thinking led to a given decision: the human's, the machine's, or both?

Human bias toward machines, often termed automation bias, not only blurs the lines of responsibility; it also makes us feel that machine output is authoritative because it is quantitative. The recommendation feels objective because it is computational. And this is exactly where the danger lies. Over time, reliance can narrow the space for dissent. If a system indicates a high probability of risk, who wishes to argue otherwise? If an algorithm identifies a pattern, who is prepared to contest its logic? And do we even want to go against the machines we designed to lead us into an uncertain future? In this sense, those who lead technology become its followers, rather than innovators willing to challenge systems that can disrupt human society at speed and scale.

Human thinking includes hesitation, doubt, and the burden of knowing that a decision may harm someone. Machines do not experience hesitation. They do not feel the weight of consequence, because they simply execute according to their logic. In human systems, the more complex governance becomes, the more we value decisiveness. And the more we value decisiveness, the more attractive machine systems appear. They offer clean answers where human deliberation appears messy or incomplete. To me, messiness is not a sign of weakness, especially when it is evidence of ethical engagement. To a system designed for efficiency, however, delayed decisions and ethical deliberation are likely to register as inefficiency.

Legitimacy and Human Thinking

In moments of crisis, societies must decide not only what is efficient, but what is legitimate. Legitimacy cannot be computed. It emerges from trust, transparency, and shared moral understanding. A decision may be technically optimal and socially corrosive at the same time. Human thinking is shaped by culture, memory, and lived experience. It absorbs historical grievances, collective aspirations, and ethical norms that do not easily translate into datasets. A system trained on data may recognize correlations; it cannot internalize meaning.

This distinction matters most when governance confronts irreversible decisions — those involving security, rights, or life itself. To delegate such decisions entirely to systems designed for pattern recognition risks narrowing the moral lens through which they are evaluated. The question, then, is not whether machines can assist human thinking. They already do. Nor is it whether computational systems will continue to expand in capability. They will.

The deeper question is whether human societies will preserve the kinds of thinking that cannot be replicated — judgment, imagination, responsibility, and the capacity to redefine objectives. A machine may calculate faster than any human. It may even identify solutions that humans overlook. But only a human can decide what is worth calculating in the first place.

Governance, ultimately, is not a problem of speed. It is a problem of direction. And direction requires a form of thinking that extends beyond pattern recognition — a thinking rooted in moral consequence and collective meaning.

The thinking machine may optimize the path. The thinking human must choose the destination.

The future will likely belong to systems where both operate together. The challenge is ensuring that calculation does not quietly displace judgment, and that efficiency does not erode responsibility.

The question is not whether machines can think. It is whether we will continue to value the kind of thinking that makes us accountable for the world we shape.